Neural Networks

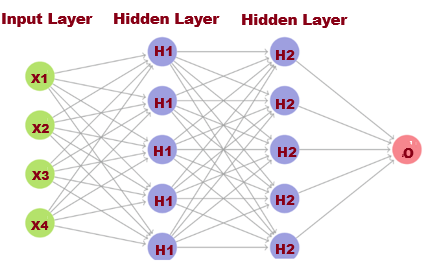

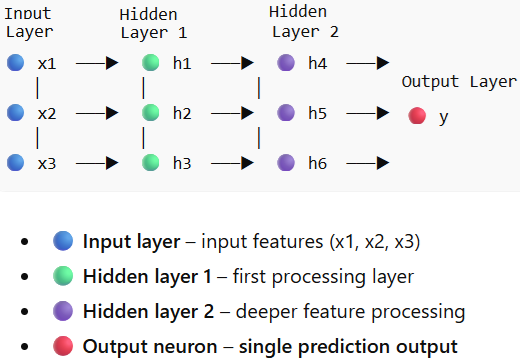

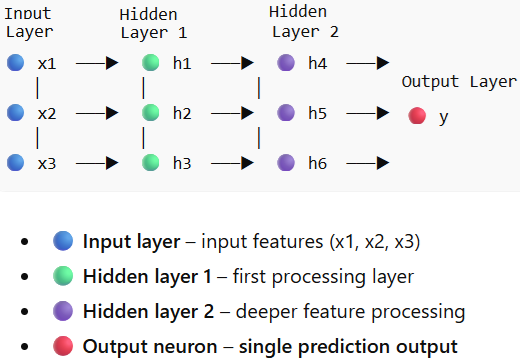

Neural networks are computational models inspired by the way biological neurons in the human brain cooperate to process information. They are made up of interconnected nodes, or artificial neurons, arranged into three kinds of layers: an input layer, one or more hidden layers, and an output layer.

In a neural network, each node performs a computation similar to linear regression. The inputs are multiplied by their corresponding weights, a bias term is added, and the result is passed through an activation function to produce the node’s output.
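The computation of a single node can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the function names `neuron_output` and `sigmoid`, and the input, weight, and bias values, are assumptions chosen for the example.

```python
import math


def sigmoid(z):
    """Logistic sigmoid, used here as the example activation function."""
    return 1.0 / (1.0 + math.exp(-z))


def neuron_output(inputs, weights, bias, activation=sigmoid):
    """Multiply inputs by weights, add the bias, then apply the activation."""
    z = sum(w * x for w, x in zip(weights, inputs)) + bias
    return activation(z)


# Illustrative values only (not taken from any dataset).
inputs = [1.0, 2.0]
weights = [0.5, -0.25]
bias = 0.0

# The weighted sum is 0.5*1.0 + (-0.25)*2.0 + 0.0 = 0.0, and sigmoid(0.0) = 0.5.
print(neuron_output(inputs, weights, bias))
```

Swapping the `activation` argument for a different function changes only the final step; the weighted sum plus bias stays the same.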

The input layer of a neural network receives the original data and passes it on to the next layer. There, each input value is multiplied by its associated weight, the products are summed, and a bias term is added. An activation function is then applied to this weighted sum to produce an output.

One or more hidden layers may exist between the input and output layers, where the output from one layer serves as the input for the subsequent layer.

The network's final prediction or outcome is ultimately produced by the output layer.

Activation Function

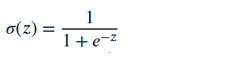

Activation functions give the network non-linearity, which is what allows it to learn and represent intricate data patterns. In a neural network, an activation function is a mathematical function that determines a neuron's output based on its input. Without it, the network would be just a linear model no matter how many layers it had, because a composition of linear functions is itself linear. Activation functions are therefore critical for tasks like image classification and natural language processing, where predictions must be made on complicated data such as images or text.

How the Activation Function Operates

Inputs are sent into a neuron, multiplied by their weights, and summed together with a bias term. The activation function is then applied to this sum, transforming the value. The function's output is the final output of that neuron, which is passed on to the next layer.

Types of activation functions

Rectified Linear Unit (ReLU): A common choice for hidden layers, it outputs the input directly if it's positive, and zero otherwise.

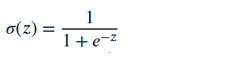

Sigmoid: Squashes the output to a range between \(0\) and \(1\). It is widely used in machine learning as an activation function in neural networks and in logistic regression to convert scores into probabilities, providing a bounded and interpretable output.

Hyperbolic Tangent (Tanh): Similar to sigmoid but squashes the output to a range between \(-1\) and \(1\).

Softmax: Typically used in the output layer for multi-class classification problems, as it converts the output values into probabilities that sum to \(1\).
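The four functions above can be written out directly. The following is a small sketch using only Python's standard library; the function names are illustrative, and the max-subtraction in `softmax` is a common numerical-stability trick added here rather than something described in the text.

```python
import math


def relu(z):
    """Return z if it is positive, otherwise zero."""
    return max(0.0, z)


def sigmoid(z):
    """Squash z into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def tanh(z):
    """Squash z into the range (-1, 1)."""
    return math.tanh(z)


def softmax(scores):
    """Convert a list of scores into probabilities that sum to 1."""
    # Subtracting the maximum does not change the result but avoids overflow.
    largest = max(scores)
    exps = [math.exp(s - largest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


print(relu(-3.0), relu(2.5))
print(sigmoid(0.0), tanh(0.0))
print(softmax([1.0, 2.0, 3.0]))
```

ReLU, sigmoid, and tanh act on one value at a time, while softmax needs the whole vector of scores because each probability depends on all of them.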

Loss Function

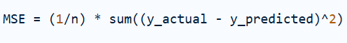

A loss function, sometimes referred to as a cost function, measures the difference between a neural network's predicted output and the actual (ground truth) output. It gives a numerical indicator of the model's performance, with lower loss values denoting better performance. The aim of neural network training is to minimize this loss, usually with an optimization technique such as gradient descent.
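To make the idea of minimizing a loss concrete, here is a minimal gradient descent sketch. It assumes a hypothetical one-parameter model `y = w * x`, uses the average squared difference as the loss, and picks the data, learning rate, and step count purely for illustration.

```python
def squared_error_loss(w, xs, ys):
    """Average squared difference between predictions w*x and targets y."""
    return sum((w * x - y) ** 2 for x, y in zip(xs, ys)) / len(xs)


def loss_gradient(w, xs, ys):
    """Derivative of the loss with respect to w: mean of 2 * (w*x - y) * x."""
    return sum(2 * (w * x - y) * x for x, y in zip(xs, ys)) / len(xs)


# Toy data generated by y = 2x, so the best weight is 2.0.
xs = [1.0, 2.0, 3.0]
ys = [2.0, 4.0, 6.0]

w = 0.0               # initial guess
learning_rate = 0.05  # assumed step size

for step in range(200):
    # Move the weight a small step against the gradient of the loss.
    w -= learning_rate * loss_gradient(w, xs, ys)

print(w, squared_error_loss(w, xs, ys))
```

Each update lowers the loss a little; real networks apply the same idea to every weight and bias at once, with the gradients computed by backpropagation.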

The choice of loss function depends on the task the neural network is performing:

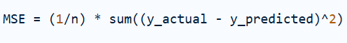

1. Regression Loss Functions (for predicting continuous values):

Mean Squared Error (MSE) / L2 Loss.

Calculates the average of the squared differences between predicted and actual values.

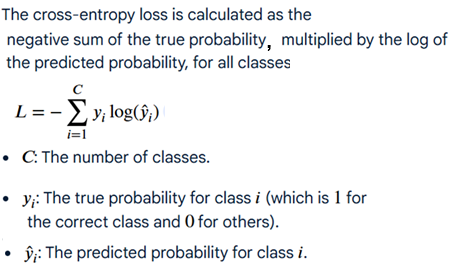

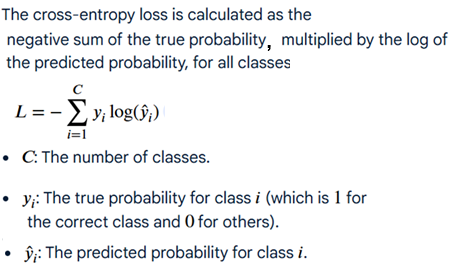
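As a small worked example of MSE, the sketch below computes it for three predictions. The function name `mean_squared_error` and the sample values are illustrative assumptions.

```python
def mean_squared_error(y_true, y_pred):
    """Average of the squared differences between actual and predicted values."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


y_true = [3.0, 5.0, 2.0]
y_pred = [2.0, 5.0, 4.0]

# Squared differences are 1, 0, and 4, so the mean is 5/3.
print(mean_squared_error(y_true, y_pred))
```

Because the differences are squared, the single error of 2 contributes four times as much as the error of 1, which is why MSE is sensitive to large mistakes.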

2. Cross-entropy Loss Function.

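Cross-entropy is a common choice for classification tasks, particularly when the output layer uses softmax. It penalizes the model according to how little probability it assigns to the correct class.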
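The pairing of softmax and cross-entropy can be sketched as follows for a single example with one correct class. The names `softmax` and `cross_entropy`, the raw scores, and the small `eps` guard against `log(0)` are assumptions made for this illustration.

```python
import math


def softmax(scores):
    """Convert raw scores into probabilities that sum to 1."""
    largest = max(scores)
    exps = [math.exp(s - largest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def cross_entropy(probs, true_class, eps=1e-12):
    """Negative log of the probability assigned to the correct class."""
    return -math.log(max(probs[true_class], eps))


scores = [2.0, 1.0, 0.1]  # hypothetical raw outputs for three classes
probs = softmax(scores)

loss_if_class_0 = cross_entropy(probs, 0)  # model favors class 0
loss_if_class_2 = cross_entropy(probs, 2)  # model gives class 2 little weight

print(probs)
print(loss_if_class_0, loss_if_class_2)
```

The loss is small when the correct class already has the highest probability and grows as that probability shrinks toward zero.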

Types of Neural Networks

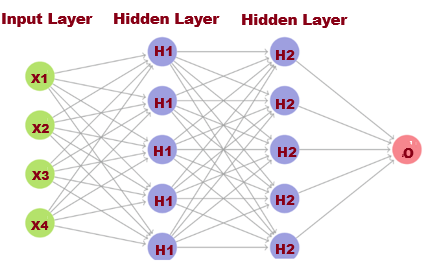

1. Feedforward Neural Networks (FNN)

One of the most basic architectures in machine learning and artificial intelligence is the feedforward neural network (FNN). Because there are no loops or cycles and information flows only forward from input to output, it is known as feedforward.

Many contemporary models used in tasks like classification, regression, pattern recognition, and prediction are built around FNNs.

An FNN is composed of layers of neurons: an input layer, one or more hidden layers, and an output layer.

Input Layer

The input layer receives raw data, for example image pixels or numerical data.

If a dataset has n features, the input layer has n neurons.

Hidden Layer

An FNN can have multiple hidden layers, which allow the network to learn complex nonlinear relationships.

Networks with many hidden layers fall under deep learning.

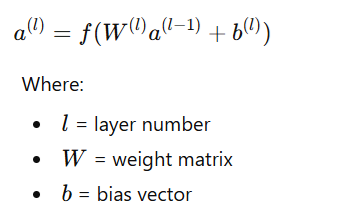

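Each neuron computes \(a = f\left(\sum_i w_i x_i + b\right)\), where \(x_i\) are the inputs, \(w_i\) the corresponding weights, \(b\) the bias term, and \(f\) the activation function.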

Output Layer

The output layer produces the final prediction.

| Task | Output |
| --- | --- |
| Binary classification | 1 neuron |
| Multi-class classification | n neurons |
| Regression | 1 neuron |
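Putting the three layer types together, a forward pass through a tiny FNN might look like the sketch below. The architecture (3 inputs, 2 hidden neurons with ReLU, 1 sigmoid output for binary classification), the weights, and the helper names `dense_layer` and `forward` are all assumptions chosen for illustration; a trained network would learn its weights from data.

```python
import math


def relu(z):
    return max(0.0, z)


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def dense_layer(inputs, weights, biases, activation):
    """Compute one layer: weights[j] holds the weights of neuron j."""
    return [
        activation(sum(w * x for w, x in zip(neuron_weights, inputs)) + b)
        for neuron_weights, b in zip(weights, biases)
    ]


def forward(x, layers):
    """Pass x through each layer in order; information only flows forward."""
    for weights, biases, activation in layers:
        x = dense_layer(x, weights, biases, activation)
    return x


# Hypothetical hand-picked parameters.
layers = [
    ([[0.5, -0.5, 0.0], [0.0, 1.0, 1.0]], [0.0, -1.0], relu),  # hidden layer
    ([[1.0, -1.0]], [0.0], sigmoid),                           # output layer
]

features = [2.0, 1.0, 1.0]  # a sample with n = 3 features
prediction = forward(features, layers)
print(prediction)  # a single value between 0 and 1
```

The output of each layer becomes the input of the next, and the number of neurons in the last layer follows the table above: one for binary classification or regression, one per class for multi-class problems.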

2. Recurrent Neural Networks (RNN)

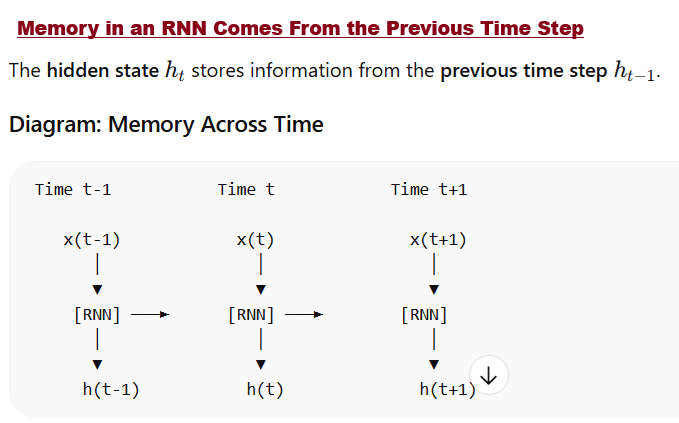

Neural networks that process sequential or time-dependent data are known as recurrent neural networks (RNNs). An RNN, in contrast to a feedforward network, contains connections that loop back, enabling data from earlier stages to affect present predictions. Because of this, RNNs are very helpful in fields like time-series forecasting, speech recognition, and natural language processing.

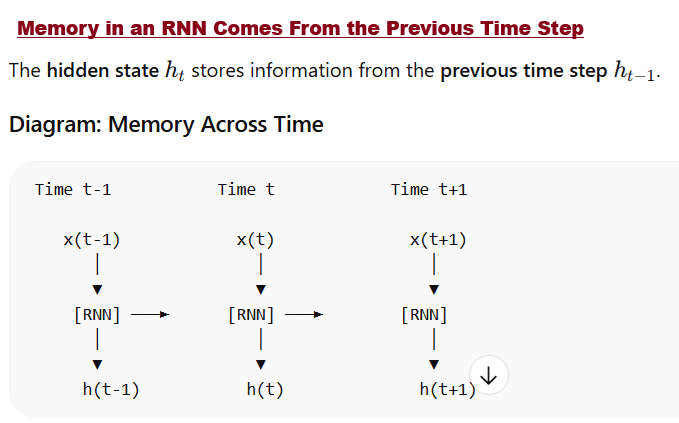

In a standard recurrent neural network (RNN), memory is carried across time steps, not across layers. The hidden state passes information from the previous time step to the current time step: each step combines the current input with the previous hidden state.

Think of RNN memory like reading a sentence. Example sentence:

"I love neural networks"

When reading the word “networks”, the model remembers “I love neural” from previous time steps.

That memory is stored in the hidden state.
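The way the hidden state carries memory can be sketched with a deliberately simplified RNN. This example assumes scalar inputs and a scalar hidden state with the common update \(h_t = \tanh(w_x x_t + w_h h_{t-1} + b)\); the function names and the weight values are hypothetical.

```python
import math


def rnn_step(x_t, h_prev, w_x, w_h, b):
    """Combine the current input with the previous hidden state."""
    return math.tanh(w_x * x_t + w_h * h_prev + b)


def run_rnn(sequence, w_x=0.5, w_h=0.8, b=0.0, h0=0.0):
    """Process a sequence one time step at a time, returning every hidden state."""
    h = h0
    states = []
    for x_t in sequence:
        h = rnn_step(x_t, h, w_x, w_h, b)
        states.append(h)
    return states


# Two sequences that differ only in their first element.
states_a = run_rnn([1.0, 0.0, 0.0])
states_b = run_rnn([0.0, 0.0, 0.0])

print(states_a)
print(states_b)
```

Both sequences end with the same two inputs, yet the final hidden state of the first sequence is still non-zero: the information from the first time step has been carried forward, just as "I love neural" is remembered when reading "networks".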

3. Convolutional Neural Networks (CNN)

A convolutional neural network (CNN) is a kind of neural network designed specifically to handle grid-like input, particularly images. CNNs automatically learn spatial features such as edges, contours, and objects from images. They are frequently used in computer vision and deep learning applications such as object detection, video analysis, and image recognition.
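The core operation behind this feature extraction is sliding a small kernel over the image. The sketch below implements it in plain Python with no padding and a stride of 1; the function name `conv2d_valid`, the toy image, and the hand-picked kernel are assumptions for illustration, and in a real CNN the kernel values are learned during training.

```python
def conv2d_valid(image, kernel):
    """Slide the kernel over the image and sum the elementwise products.

    As in most deep learning libraries, the kernel is not flipped, so this
    is technically a cross-correlation.
    """
    kernel_h, kernel_w = len(kernel), len(kernel[0])
    out_h = len(image) - kernel_h + 1
    out_w = len(image[0]) - kernel_w + 1
    output = []
    for i in range(out_h):
        row = []
        for j in range(out_w):
            total = 0
            for di in range(kernel_h):
                for dj in range(kernel_w):
                    total += image[i + di][j + dj] * kernel[di][dj]
            row.append(total)
        output.append(row)
    return output


# A tiny grayscale image: dark on the left, bright on the right.
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]

# A kernel that responds where brightness increases from left to right.
kernel = [[-1, 1]]

feature_map = conv2d_valid(image, kernel)
print(feature_map)  # [[0, 1, 0], [0, 1, 0], [0, 1, 0]]
```

The non-zero column in the feature map marks the vertical edge in the image, which is the kind of spatial feature the early layers of a CNN tend to pick up.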

Real-World Use Cases of CNN

1. Image Classification

Used to identify objects in images, for example identifying animals, classifying plants, and recognizing products.

2. Facial Recognition

CNNs are widely used in phone unlocking, security systems, and surveillance.

3. Self-Driving Cars

CNNs help autonomous vehicles detect pedestrians, traffic signs, lanes, and obstacles. They are used in systems developed by Tesla.

4. Medical Image Analysis

CNNs help detect diseases in:

X-rays

MRI scans

CT scans

Example:

tumor detection

cancer diagnosis

5. Object Detection

CNN models can locate objects in images.

Example tasks:

Image → Detect car, person, bicycle

Used in surveillance and robotics.

6. Video Analysis

CNNs analyze frames in videos for:

action recognition

security monitoring

sports analytics

7. Document Processing

CNNs help with:

handwriting recognition

digit recognition

text detection

Example dataset:

MNIST (handwritten digits).