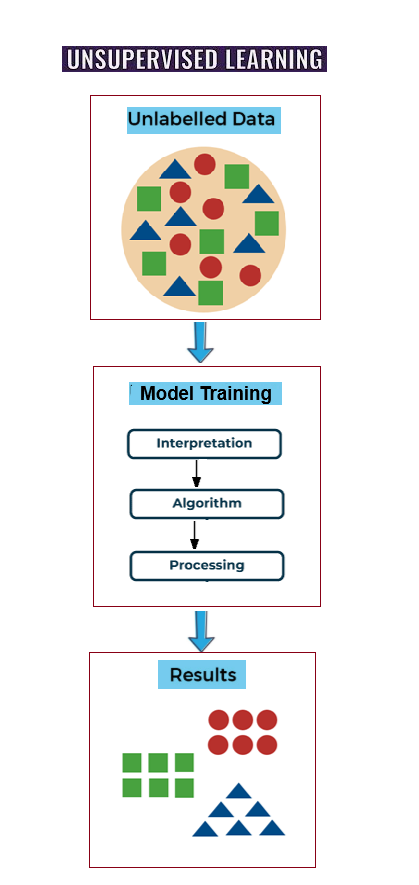

Unsupervised learning uses unlabeled data, so there are no predefined target outputs. The algorithm independently identifies relationships, groups, and hidden patterns in the data. Its primary applications are clustering, dimensionality reduction, and data visualization. It is frequently used in fraud detection, recommendation systems, and marketing, where discovering patterns matters more than predicting labels.

In unsupervised learning, machine learning algorithms (sometimes called self-learning algorithms) are trained on unlabeled data sets, that is, input data that has not been classified or tagged. The appropriate algorithm is selected based on the task (the machine learning problem, such as association or clustering) and on the data set.

During training, the algorithm learns and infers its own rules from the similarities, patterns, and differences among the data points; it needs no labels (target values) to learn. Training the algorithm on a data set produces a machine learning model. Because the data is unlabeled (no target values and no human supervision), the result is an unsupervised machine learning model, ready to carry out tasks such as clustering, association, and dimensionality reduction. Unsupervised models are well suited to complex tasks such as clustering very large datasets.

Applications for unsupervised learning are numerous and span many fields and industries. Among the main uses are:

Customer Segmentation: Algorithms group customers by purchasing habits or demographics, enabling targeted marketing.

Anomaly Detection: Finds unusual patterns in data, which helps with fraud detection, cybersecurity, and preventing equipment malfunctions.

Recommendation Systems: Recommend products, films, or music based on an analysis of user preferences and behavior.

Image and Text Clustering: Groups related documents or images for organization, categorization, or content recommendation.

Social Network Analysis: Identifies user interaction patterns or communities on social media networks.
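As a minimal sketch of anomaly detection, the code below flags values whose z-score (distance from the mean, measured in standard deviations) exceeds a threshold. This simple statistical baseline is chosen here for illustration and is not a method the text prescribes; the function name `zscore_outliers`, the threshold values, and the transaction amounts are all hypothetical.

```python
import statistics

def zscore_outliers(values, threshold=3.0):
    """Return the indices of values more than `threshold` standard deviations from the mean."""
    mean = statistics.fmean(values)
    std = statistics.pstdev(values)  # population standard deviation
    if std == 0:
        return []  # all values identical: nothing stands out
    return [i for i, v in enumerate(values) if abs(v - mean) / std > threshold]

# Hypothetical daily transaction amounts; the last one is unusually large.
amounts = [20, 22, 19, 21, 23, 20, 18, 22, 21, 500]
print(zscore_outliers(amounts, threshold=2.5))
```

With small samples, a single extreme value inflates the standard deviation and caps how large its own z-score can get, which is why the example uses a threshold of 2.5 rather than the common 3.0.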

The main advantages of unsupervised learning are:

1. No labeled data required: It works with raw, unlabeled data, saving the time and effort of data annotation.

2. Finds hidden patterns: Identifies natural structures and groupings that people might overlook.

3. Handles large and complex datasets: Works well with large or high-dimensional data.

4. Useful for anomaly detection: Can spot outliers and unusual data points without needing previously labeled examples of anomalies.

Clustering algorithms identify natural groupings within unlabeled data by separating data points into clusters according to their similarity.

K-Means Clustering:

K-means clustering, one of the most popular and widely used clustering algorithms, divides data into a predefined number (K) of clusters. Each data point is assigned to the cluster with the nearest mean (centroid); the centroids are then recomputed from their assigned points, and the two steps repeat until the assignments stop changing.
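The assign-then-update loop can be sketched in a few lines of NumPy (Lloyd's algorithm). This is a minimal illustration assuming NumPy is installed; the function names `assign_to_nearest` and `kmeans` and the toy points are hypothetical. Centroids start at randomly chosen data points, the simplest option rather than what production libraries use.

```python
import numpy as np

def assign_to_nearest(X, centroids):
    """Return, for each row of X, the index of the nearest centroid (Euclidean distance)."""
    dists = np.linalg.norm(X[:, None, :] - centroids[None, :, :], axis=2)
    return dists.argmin(axis=1)

def kmeans(X, k, n_iters=100, seed=0):
    """Lloyd's algorithm: alternate assignment and centroid-update steps until stable."""
    rng = np.random.default_rng(seed)
    # Initialize the centroids as k distinct data points chosen at random.
    centroids = X[rng.choice(len(X), size=k, replace=False)]
    for _ in range(n_iters):
        labels = assign_to_nearest(X, centroids)
        # Move each centroid to the mean of its points; keep it in place if its cluster is empty.
        new_centroids = np.array([
            X[labels == j].mean(axis=0) if np.any(labels == j) else centroids[j]
            for j in range(k)
        ])
        if np.allclose(new_centroids, centroids):
            break  # converged: the centroids stopped moving
        centroids = new_centroids
    return assign_to_nearest(X, centroids), centroids

# Two well-separated toy groups of 2-D points.
X = np.array([[1.0, 1.0], [1.5, 2.0], [1.2, 1.4],
              [8.0, 8.0], [8.5, 9.0], [9.0, 8.2]])
labels, centroids = kmeans(X, k=2)
print(labels)
```

Because the result depends on the initial centroids, libraries such as scikit-learn's `KMeans` default to k-means++ initialization and run several restarts.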

Hierarchical Clustering: This method builds a hierarchy of clusters, either by starting with individual data points and merging them step by step (agglomerative) or by starting with a single large cluster and splitting it (divisive).
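The agglomerative (bottom-up) variant can be sketched naively as below, assuming NumPy is available. It uses single linkage (the distance between the closest members of two clusters) as one possible merge criterion and does not show the divisive variant; the function name `single_linkage` and the toy points are hypothetical. The recorded `merges` list is the hierarchy, read from the bottom up.

```python
import numpy as np

def single_linkage(X, n_clusters):
    """Naive agglomerative clustering with single linkage.

    Starts with one cluster per point and repeatedly merges the two clusters
    whose closest members are nearest, until n_clusters remain.
    """
    D = np.linalg.norm(X[:, None, :] - X[None, :, :], axis=2)  # pairwise point distances
    clusters = [[i] for i in range(len(X))]
    merges = []  # (cluster_a, cluster_b, distance) for each merge, bottom-up
    while len(clusters) > n_clusters:
        best = None
        for a in range(len(clusters)):
            for b in range(a + 1, len(clusters)):
                # Single linkage: distance between the two closest members of the clusters.
                d = D[np.ix_(clusters[a], clusters[b])].min()
                if best is None or d < best[0]:
                    best = (d, a, b)
        d, a, b = best
        merges.append((clusters[a], clusters[b], float(d)))
        clusters[a] = clusters[a] + clusters[b]
        del clusters[b]  # b > a, so index a is unaffected
    return clusters, merges

X = np.array([[0, 0], [0, 1], [5, 5], [5, 6], [10, 0]], dtype=float)
clusters, merges = single_linkage(X, n_clusters=3)
print(clusters)  # [[0, 1], [2, 3], [4]]
```

This brute-force search is only for illustration; for real data, SciPy's `scipy.cluster.hierarchy.linkage` computes the full hierarchy far more efficiently.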

DBSCAN (Density-Based Spatial Clustering of Applications with Noise): DBSCAN forms clusters from dense regions of data points and classifies points in sparse regions as noise. This makes it well suited to data with irregularly shaped clusters and outliers, and, unlike K-means, it does not require the number of clusters to be specified in advance.
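A minimal DBSCAN sketch, assuming NumPy is available, shows how dense regions grow into clusters while isolated points stay as noise. The parameter names `eps` (neighborhood radius) and `min_pts` (minimum neighbors for a core point) follow common usage, but the function itself and the toy data are hypothetical. The neighborhood count includes the point itself, which is also how scikit-learn's `DBSCAN` counts `min_samples`.

```python
import numpy as np

NOISE = -1

def dbscan(X, eps, min_pts):
    """Return a cluster label per point, or -1 (NOISE) for points in sparse regions."""
    n = len(X)
    D = np.linalg.norm(X[:, None, :] - X[None, :, :], axis=2)
    neighbors = [np.flatnonzero(D[i] <= eps) for i in range(n)]  # includes the point itself
    labels = np.full(n, NOISE)
    visited = np.zeros(n, dtype=bool)
    cluster = 0
    for i in range(n):
        if visited[i]:
            continue
        visited[i] = True
        if len(neighbors[i]) < min_pts:
            continue  # not a core point: stays noise unless a cluster reaches it later
        # i is a core point: start a new cluster and expand it through dense neighbors.
        labels[i] = cluster
        queue = list(neighbors[i])
        while queue:
            j = queue.pop()
            if labels[j] == NOISE:
                labels[j] = cluster  # border or core point joins the cluster
            if visited[j]:
                continue
            visited[j] = True
            if len(neighbors[j]) >= min_pts:
                queue.extend(neighbors[j])  # j is also a core point: keep expanding
        cluster += 1
    return labels

X = np.array([[0, 0], [0, 0.5], [0.5, 0],
              [10, 10], [10, 10.5], [10.5, 10],
              [50, 50]], dtype=float)
print(dbscan(X, eps=1.0, min_pts=3).tolist())  # [0, 0, 0, 1, 1, 1, -1]
```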

Gaussian Mixture Models (GMMs): GMMs are probabilistic models that perform "soft" clustering, in which a data point can belong to several clusters with different probabilities. They do this by assuming that the data points are generated by a mixture of several Gaussian distributions.
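GMMs are commonly fitted with the expectation-maximization (EM) algorithm, and the per-point responsibilities it computes are exactly the "soft" cluster memberships. The sketch below is a minimal one-dimensional version assuming NumPy is available; the function names, the quantile-based initialization, and the synthetic data are illustrative choices, not a prescribed implementation.

```python
import numpy as np

def responsibilities(x, weights, means, variances):
    """E-step: r[i, j] = probability that point x[i] came from component j (soft assignment)."""
    dens = np.exp(-(x[:, None] - means) ** 2 / (2 * variances)) / np.sqrt(2 * np.pi * variances)
    weighted = weights * dens
    return weighted / weighted.sum(axis=1, keepdims=True)

def gmm_1d(x, k=2, n_iters=100):
    """Fit a 1-D Gaussian mixture model with expectation-maximization (EM)."""
    # Spread the initial means over the data's quantiles; start with equal weights
    # and the overall variance for every component.
    means = np.quantile(x, (np.arange(k) + 0.5) / k)
    weights = np.full(k, 1.0 / k)
    variances = np.full(k, x.var())
    for _ in range(n_iters):
        r = responsibilities(x, weights, means, variances)
        # M-step: re-estimate each component from all points, weighted by responsibility.
        nk = r.sum(axis=0)
        weights = nk / len(x)
        means = (r * x[:, None]).sum(axis=0) / nk
        variances = (r * (x[:, None] - means) ** 2).sum(axis=0) / nk + 1e-6  # avoid zero variance
    return weights, means, variances, responsibilities(x, weights, means, variances)

# Synthetic data: two overlapping-free groups centered at 0 and 10.
rng = np.random.default_rng(1)
x = np.concatenate([rng.normal(0.0, 1.0, 200), rng.normal(10.0, 1.0, 200)])
weights, means, variances, r = gmm_1d(x)
print(np.round(means, 2))
print(np.round(r[:3], 3))  # soft memberships of the first three points
```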

Dimensionality reduction methods reduce the number of features (variables) in a dataset while preserving the most important information, which speeds up training and aids data visualization.

PCA: Principal Component Analysis (PCA), arguably the most popular dimensionality reduction technique, transforms the data into a new set of uncorrelated variables (principal components), ordered so that each captures as much of the remaining variance in the data as possible.
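One standard way to compute PCA is the singular value decomposition of the centered data. The minimal sketch below assumes NumPy is available; the function name `pca` and the synthetic data (points lying close to the line y = 2x) are hypothetical. The rows of `Vt` are the principal directions, already sorted by the variance they capture.

```python
import numpy as np

def pca(X, n_components):
    """Project X onto its top principal components, computed via SVD of the centered data."""
    Xc = X - X.mean(axis=0)                        # center each feature
    U, S, Vt = np.linalg.svd(Xc, full_matrices=False)
    components = Vt[:n_components]                 # principal directions, highest variance first
    explained_var = S[:n_components] ** 2 / (len(X) - 1)
    return Xc @ components.T, components, explained_var

# Synthetic 2-D data that lies close to the line y = 2x.
rng = np.random.default_rng(0)
t = rng.normal(size=200)
X = np.column_stack([t, 2 * t + 0.1 * rng.normal(size=200)])
Z, components, explained_var = pca(X, n_components=2)
print(explained_var)  # almost all variance is in the first component
```

Keeping only the first column of `Z` would reduce this data from two features to one while retaining nearly all of its variance.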

ICA:

Independent Component Analysis (ICA) separates a multivariate signal into additive subcomponents that are statistically independent of one another. A well-known application is the "cocktail party problem": separating the individual voices in a recording of a noisy room.
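The text does not name a specific ICA algorithm; FastICA, a fixed-point method that searches for maximally non-Gaussian projections of whitened data, is one common choice. The sketch below is a minimal deflation-based version with a tanh nonlinearity, assuming NumPy is available. The function name `fast_ica` and the synthetic "two speakers, two microphones" setup (a sine wave and a square wave mixed by a hypothetical matrix `A`) are illustrative assumptions.

```python
import numpy as np

def fast_ica(X, n_components, n_iters=200, tol=1e-6, seed=0):
    """Minimal FastICA (deflation, tanh nonlinearity). X has shape (n_samples, n_features)."""
    rng = np.random.default_rng(seed)
    # Center and whiten, so the transformed features are uncorrelated with unit variance.
    Xc = X - X.mean(axis=0)
    eigvals, eigvecs = np.linalg.eigh(np.cov(Xc, rowvar=False))
    Z = Xc @ (eigvecs / np.sqrt(eigvals))       # divides each eigenvector column by sqrt(eigenvalue)
    W = np.zeros((n_components, Z.shape[1]))
    for p in range(n_components):
        w = rng.normal(size=Z.shape[1])
        w /= np.linalg.norm(w)
        for _ in range(n_iters):
            wz = Z @ w
            g, g_prime = np.tanh(wz), 1 - np.tanh(wz) ** 2
            # Fixed-point update: w <- E[z g(w.z)] - E[g'(w.z)] w
            w_new = (Z * g[:, None]).mean(axis=0) - g_prime.mean() * w
            # Deflation: remove the projections onto components already found.
            w_new -= W[:p].T @ (W[:p] @ w_new)
            w_new /= np.linalg.norm(w_new)
            converged = abs(abs(w_new @ w) - 1) < tol  # direction stable up to sign
            w = w_new
            if converged:
                break
        W[p] = w
    return Z @ W.T  # estimated independent sources, shape (n_samples, n_components)

# Two hypothetical "voices" recorded by two "microphones" as linear mixtures.
t = np.linspace(0, 8, 2000)
S = np.column_stack([np.sin(2 * t), np.sign(np.sin(3 * t))])
A = np.array([[1.0, 1.0], [0.5, 2.0]])
X = S @ A.T
S_est = fast_ica(X, n_components=2)
print(np.round(np.abs(np.corrcoef(S.T, S_est.T)[:2, 2:]), 2))
```

ICA recovers the sources only up to order, sign, and scale, which is why the usage compares absolute correlations rather than raw values.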