|

PythonPlaza -

Python & AI

|

Supervised Machine Learning Algorithms

K-Nearest Neighbors

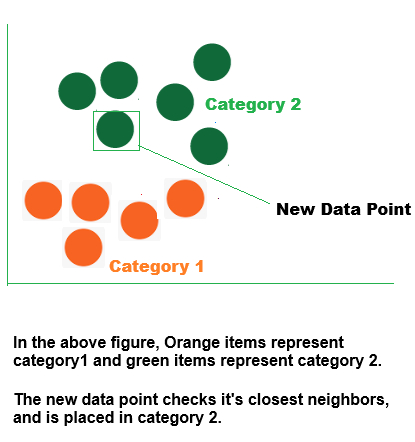

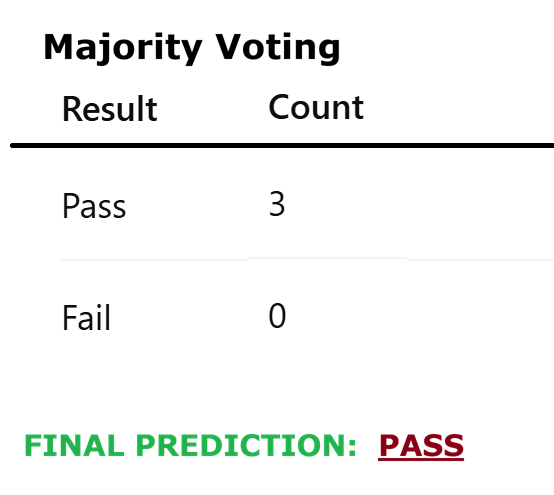

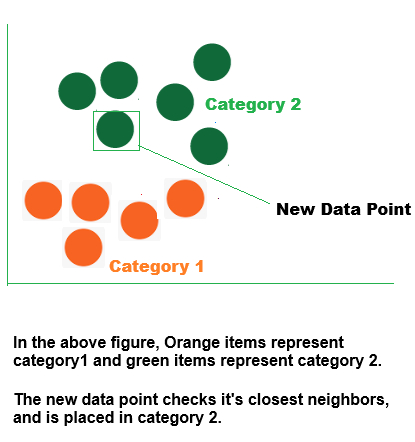

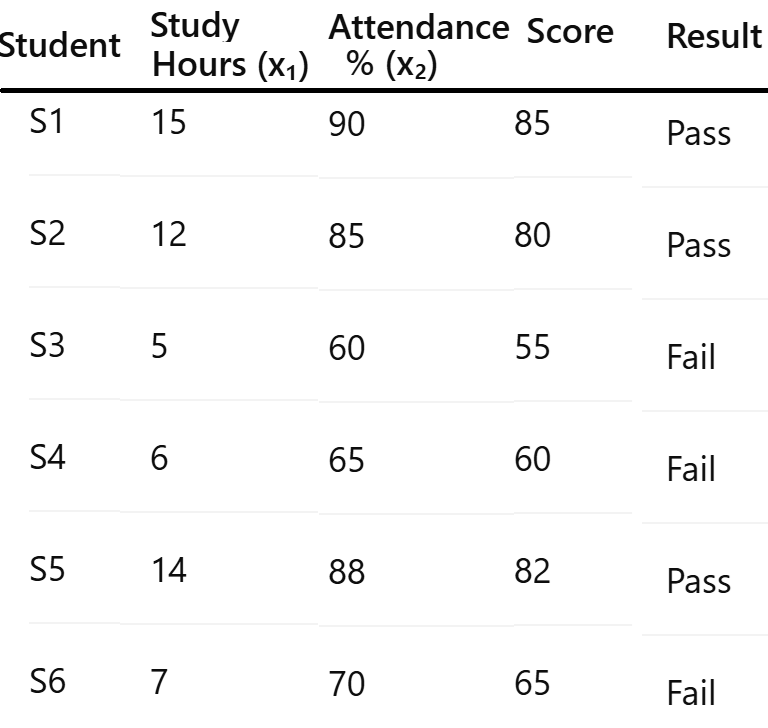

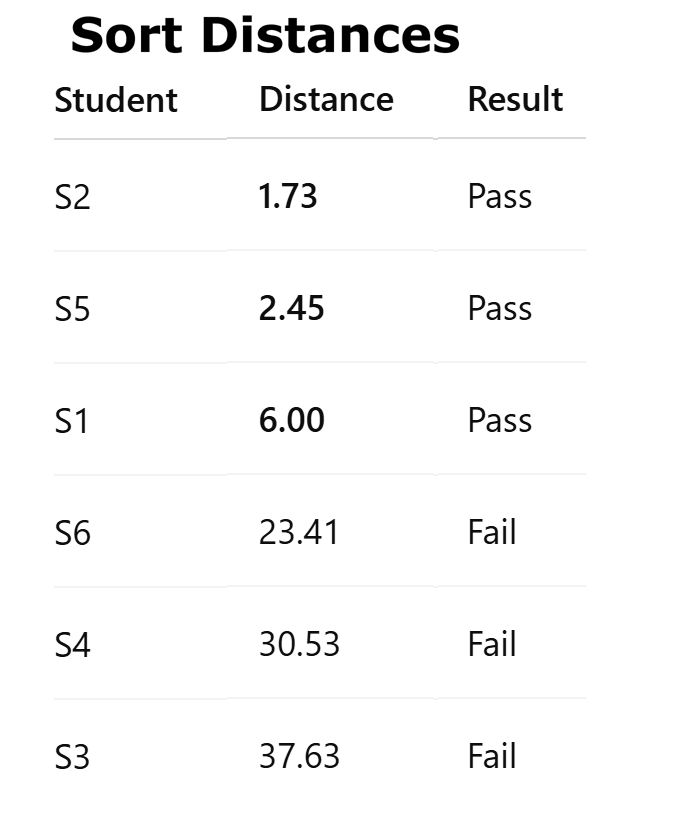

Although it can be applied to regression tasks, K-Nearest Neighbors (KNN) is a supervised machine learning technique that is mostly employed for classification. It produces predictions based on the average value (for regression) or the majority class (for classification) after locating the "k" nearest data points (neighbors) to a given input. KNN is an instance-based and non-parametric learning technique since it does not assume anything about the distribution of the underlying data.

K-Nearest Neighbors is also known as a lazy learner algorithm because, rather than learning from the training set right away, it retains the dataset and acts upon it during classification.

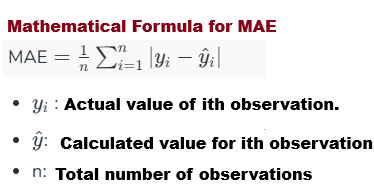

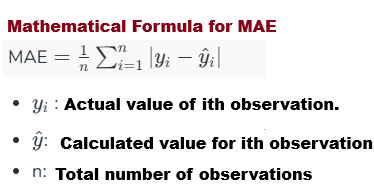

MAE (Mean Absolute Error) quantifies the average magnitude of errors for KNN regression.

Mean Absolute Error

It calculates the average discrepancy between a dataset's actual and forecasted values. Without taking direction into account, it displays the deviation between predicted and actual values.

1. Determined by utilizing absolute differences

2. Easy to calculate and understand

3. Handles every mistake equally

4. Not as susceptible to significant errors as MSE

5. Frequently employed to assess regression models

What does "K" stand for in "K Nearest Neighbor"?

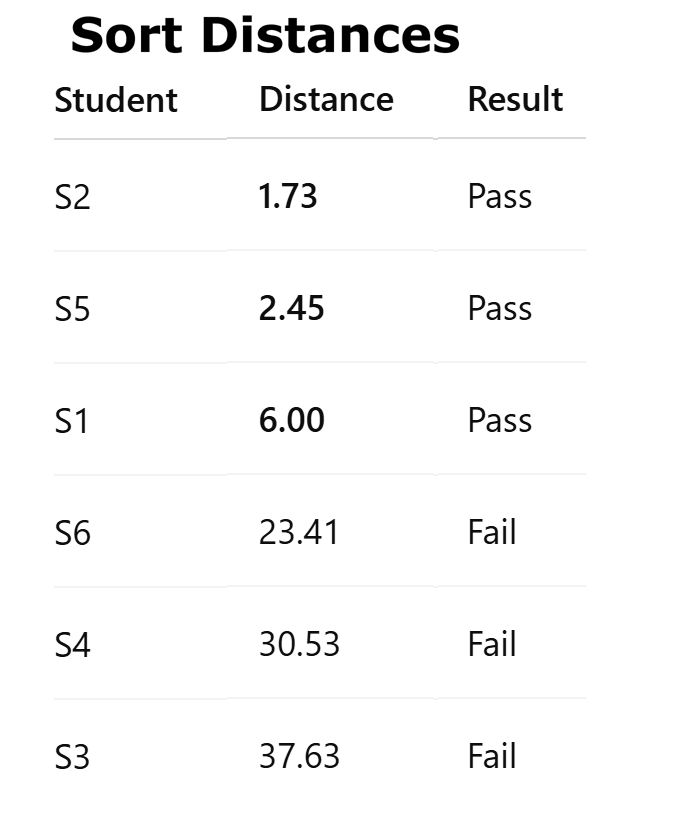

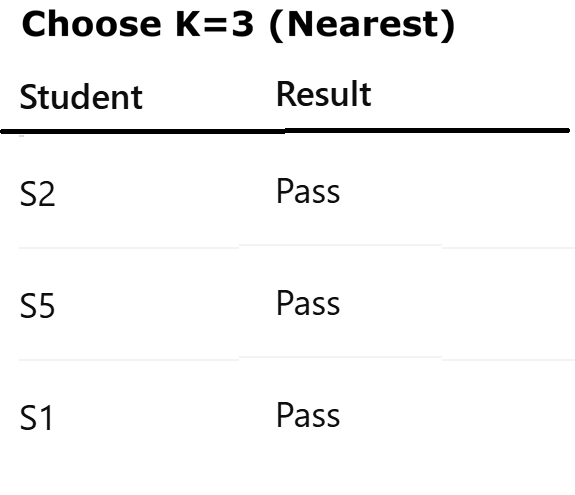

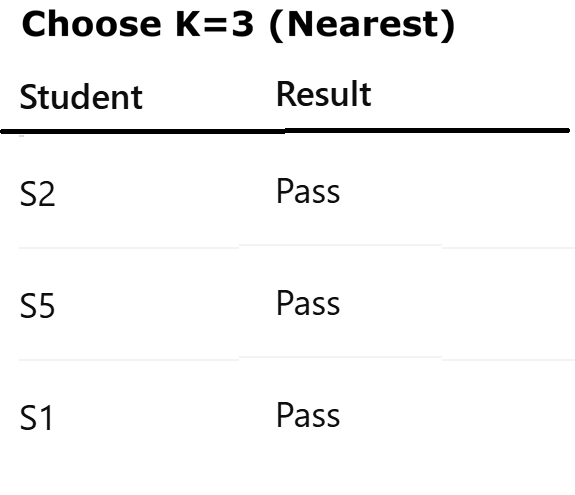

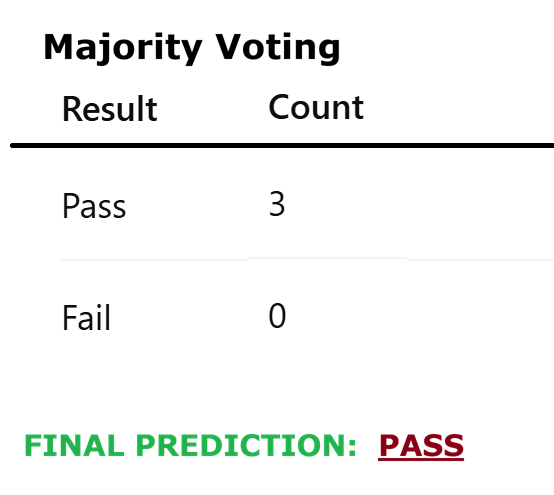

The number k in the k-Nearest Neighbors method simply indicates how many neighboring points or points the algorithm should consider while making a conclusion.

For instance, let us say you are determining the type of fruit based on its size and shape. You make comparisons to familiar fruits.

•The algorithm examines the three fruits that are closest to the new one if k = 3.

•The algorithm determines that the new fruit is a mango because the majority of its neighbors are mangos if two of those three fruits are mangos and one is a banana.

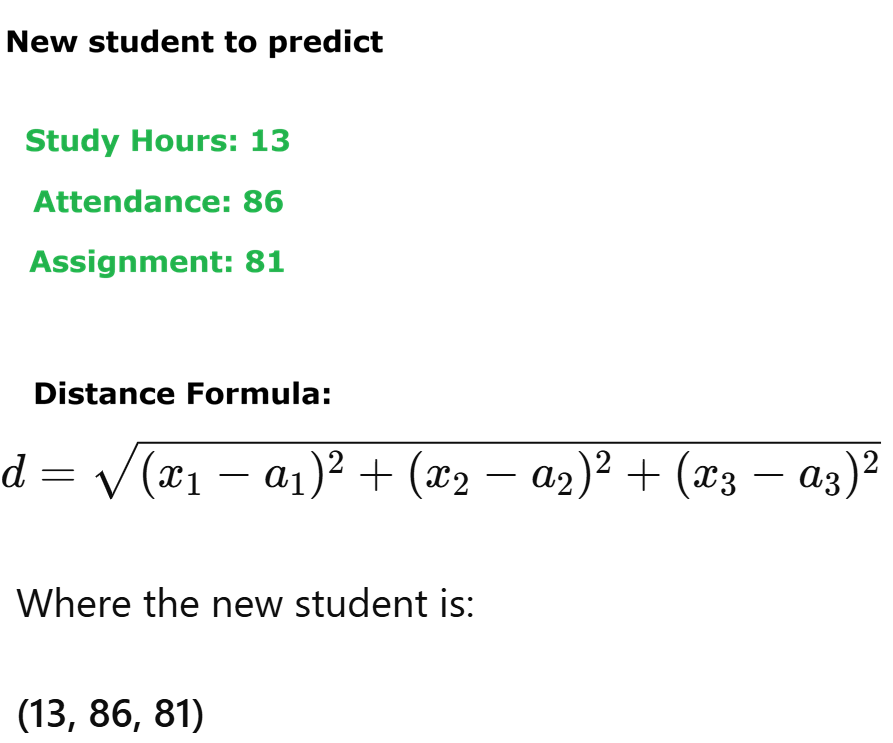

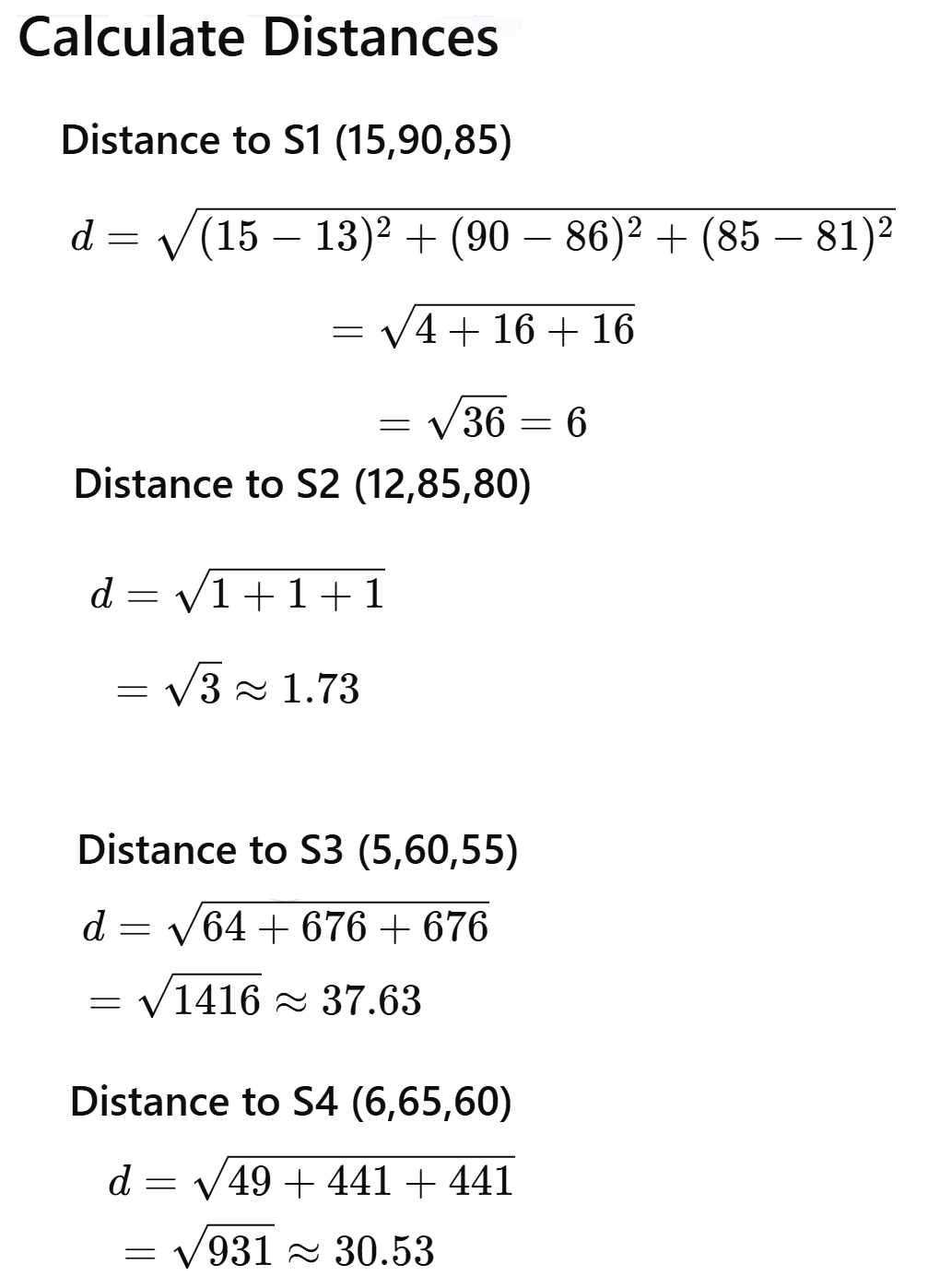

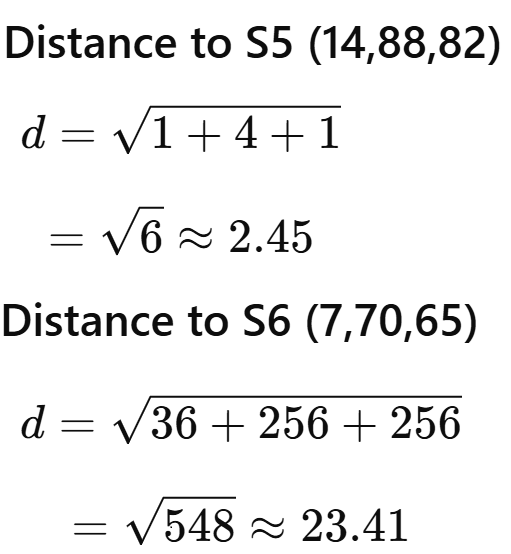

Euclidean Distance

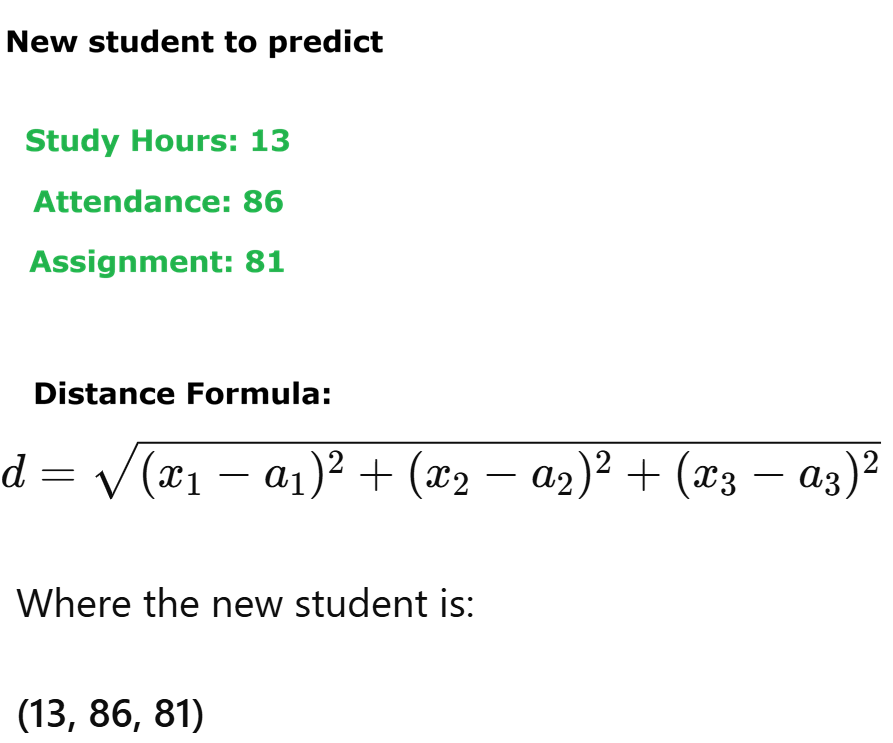

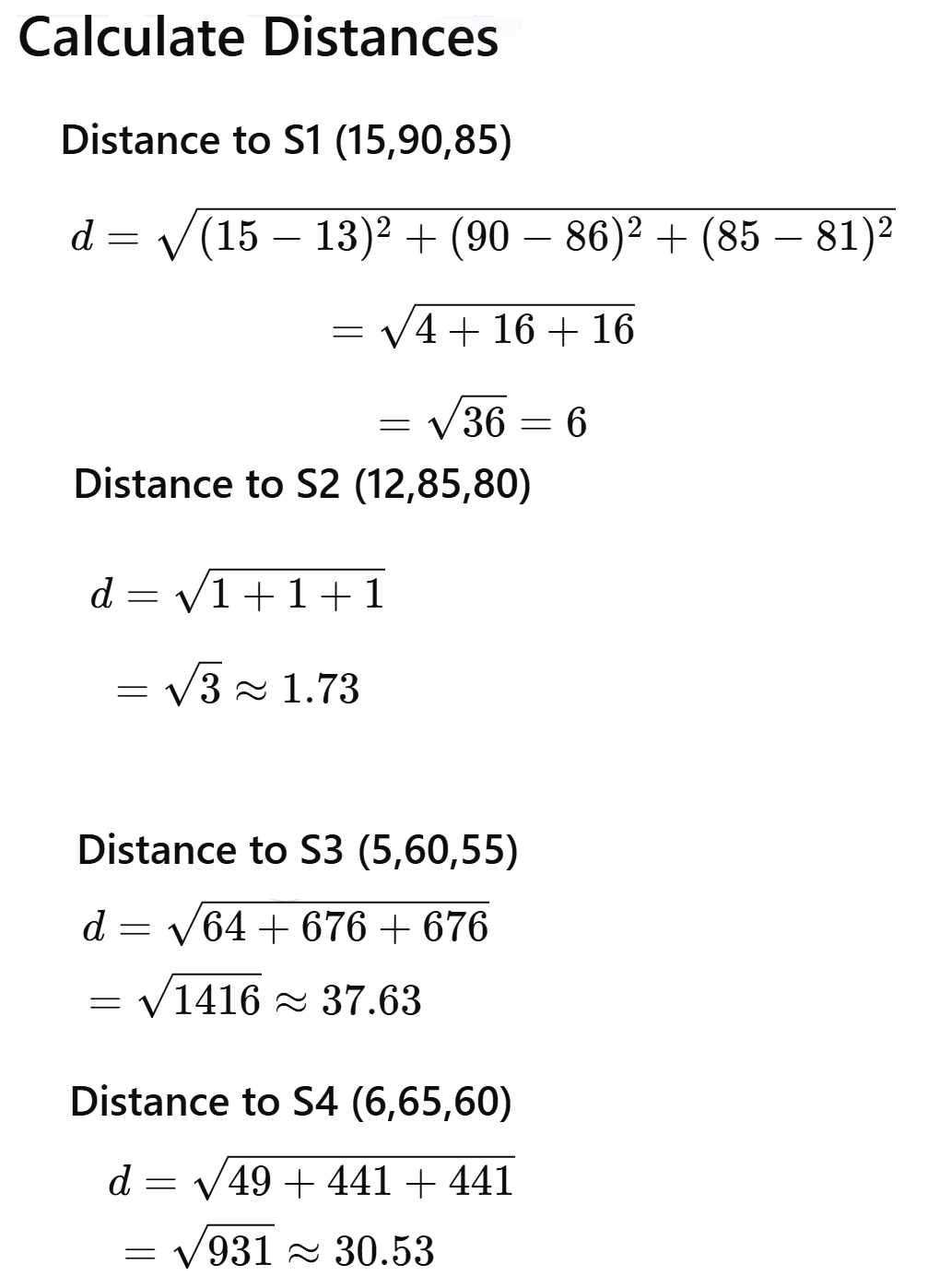

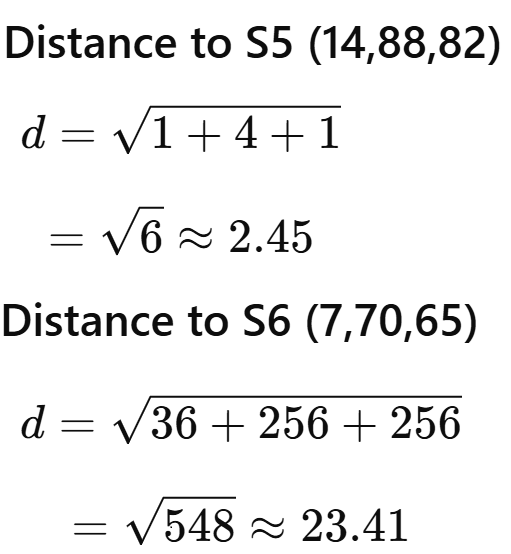

Euclidean distance is the most common distance metric used in KNN. For two points, (x1, y1) and (x2, y2), the Euclidean distance is:

√((x2 - x1)² + (y2 - y1)²).

USE CASE 1: Use K-Nearest Neighbors (KNN) with scikit-learn if a loan will default or not. Dependent variable: Default (0 = No Default, 1 = Default) Independent variables (3): Income (monthly income, e.g., 1000–10000) CreditScore (300–850) LoanAmount (1000–50000).

USE CASE 1: Use K-Nearest Neighbors (KNN) with scikit-learn if a loan will default or not. Dependent variable: Default (0 = No Default, 1 = Default) Independent variables (3): Income (monthly income, e.g., 1000–10000) CreditScore (300–850) LoanAmount (1000–50000).

import numpy as np

import pandas as pd

from sklearn.model_selection import train_test_split

from sklearn.preprocessing import StandardScaler

from sklearn.neighbors import KNeighborsClassifier

from sklearn.metrics import accuracy_score, confusion_matrix, classification_report

# -----------------------------------

# 1. Load data from Excel

# -----------------------------------

data = pd.read_excel("loan_data.xlsx")

df = pd.DataFrame(data)

print("Dataset Preview:")

print(data.head())

# -----------------------------------

# 2. Define features and target

# -----------------------------------

X = df[["Income", "CreditScore", "LoanAmount"]]

y = df["Default"]

# -----------------------------------

# 3. Split into training and testing

# -----------------------------------

X_train, X_test, y_train, y_test = train_test_split(

X, y, test_size=0.25, random_state=42, stratify=y

)

scaler = StandardScaler()

X_train_scaled = scaler.fit_transform(X_train)

X_test_scaled = scaler.transform(X_test)

# -----------------------------------

# 4. Train the KNN model

# -----------------------------------

knn_model = KNeighborsClassifier(

n_neighbors=5, # K value

metric='euclidean'

)

knn_model.fit(X_train_scaled, y_train)

y_pred = knn_model.predict(X_test_scaled)

#Evaluate the model

print("Accuracy:", accuracy_score(y_test, y_pred))

print("\nConfusion Matrix:\n", confusion_matrix(y_test, y_pred))

print("\nClassification Report:\n", classification_report(y_test, y_pred))

# Predict default for a new customer

new_customer = [[4500, 620, 16000]] # Income, CreditScore, LoanAmount

default_prediction = svm_model.predict(new_customer)

default_probability = knn_model.predict_proba(new_customer)[0][1]

print("Default Prediction:", default_prediction[0])

print("Probability of Default:", default_probability)

USE CASE 2: Customer Churn example using K-Nearest Neighbors (KNN) with scikit-learn in Python. We’ll assume 4 independent variables, for example: Tenure (months with company) - 1–60 months MonthlyCharges (amount billed per month) - 30–120 ContractType (0=Month-to-month, 1=One-year, 2=Two-year) SupportCalls (number of calls to support) 0–10 The dependent variable is Churn (0=Stay, 1=Churn)..

import numpy as np

import pandas as pd

from sklearn.model_selection import train_test_split

from sklearn.preprocessing import StandardScaler

from sklearn.neighbors import KNeighborsClassifier

from sklearn.metrics import accuracy_score, confusion_matrix, classification_report

# -----------------------------------

# 1. Load data from Excel

# -----------------------------------

#sample data can be exported to

#excel from the URL

# https://www.pythonplaza.com/categorical_customer_churn_1_or_0.html

data = pd.read_excel("customer_data.xlsx")

print("Dataset Preview:")

print(data.head())

# -----------------------------------

# 2. Define features and target

# -----------------------------------

X = df[["Tenure", "MonthlyCharges", "ContractType", "SupportCalls"]]

y = df["Churn"]

# -----------------------------------

# 3. Split into training and testing

# -----------------------------------

X_train, X_test, y_train, y_test = train_test_split(

X, y, test_size=0.25, random_state=42

)

scaler = StandardScaler()

X_train_scaled = scaler.fit_transform(X_train)

X_test_scaled = scaler.transform(X_test)

# -----------------------------------

# 4. Train the KNN model

# -----------------------------------

knn_model = KNeighborsClassifier(

n_neighbors=5, # K value

metric='euclidean'

)

knn_model.fit(X_train_scaled, y_train)

y_pred = knn_model.predict(X_test_scaled)

# -----------------------------------

# Evaluate the model

# -----------------------------------

print("Accuracy:", accuracy_score(y_test, y_pred))

print("\nConfusion Matrix:\n", confusion_matrix(y_test, y_pred))

print("\nClassification Report:\n", classification_report(y_test, y_pred))

#Predict churn for a new customer

new_customer = [[8, 92, 0, 5]]

# Tenure, MonthlyCharges, ContractType, SupportCalls

churn_prediction = knn_model.predict(new_customer)

churn_probability = knn_model.predict_proba(new_customer)[0][1]

print("Churn Prediction:", churn_prediction[0])

print("Probability of Churn:", churn_probability)

#Interpreting the results (business view)

1 → High risk of churn ⚠️

0 → Likely to stay ✅

Use probability (e.g., churn > 0.6) to trigger retention offers

USE CASE 3: Use K-Nearest Neighbors (KNN) to determine what learning style a student prefers -

Visual, Auditory, Reading/Writing, Kinesthetic (Dependent Variable)

Independent variables (How a student prefers to learn)

prefers_diagrams – How much a student likes diagrams (1-5)

prefers_lectures – How much a student likes lectures (1-5)

prefers_notes – How much a student likes reading/writing notes (1-5)

prefers_hands_on – How much a student likes hands-on activities (1-5)

import numpy as np

import pandas as pd

from sklearn.model_selection import train_test_split

from sklearn.preprocessing import StandardScaler

from sklearn.neighbors import KNeighborsClassifier

from sklearn.metrics import accuracy_score, confusion_matrix, classification_report

# -----------------------------------

# 1. Load data from Excel

# -----------------------------------

#sample data can be exported to

#excel from the URL

Get the Categorical learning Styles data in Excel

data = pd.read_excel("Categorical_learning_Styles.xlsx")

print("Dataset Preview:")

print(data.head())

# -----------------------------------

# 2. Define the data

# -----------------------------------

X = df[['prefers_diagrams', 'prefers_lectures',

'prefers_notes', 'prefers_hands_on']]

y = df['learning_style']

# Encode categorical target labels

le = LabelEncoder()

y_encoded = le.fit_transform(y)

# -----------------------------

# 3. Train-Test Split

# -----------------------------

X_train, X_test, y_train, y_test = train_test_split(

X, y_encoded, test_size=0.3, random_state=42

)

scaler = StandardScaler()

X_train_scaled = scaler.fit_transform(X_train)

X_test_scaled = scaler.transform(X_test)

# -----------------------------------

# 4. Train the KNN model

# -----------------------------------

knn_model = KNeighborsClassifier(

n_neighbors=5, # K value

metric='euclidean'

)

knn_model.fit(X_train_scaled, y_train)

y_pred = knn_model.predict(X_test_scaled)

#Evaluate the model

print("Accuracy:", accuracy_score(y_test, y_pred))

print("\nConfusion Matrix:\n", confusion_matrix(y_test, y_pred))

print("\nClassification Report:\n", classification_report(y_test, y_pred))

#Predict with sample data

new_students = np.array([

[5, 1, 2, 1], # Likely Visual

[1, 5, 3, 2], # Likely Auditory

[2, 1, 5, 2], # Likely Reading/Writing

[1, 2, 1, 5] # Likely Kinesthetic

])

# Predict encoded labels

predictions_encoded = knn_model.predict(new_students)

# Convert numeric predictions back to original labels

predictions = le.inverse_transform(predictions_encoded)

print("Predicted Learning Styles:")

print(predictions)

USE CASE 4: Use K-Nearest Neighbors (KNN) to predict if a person has a disease. Age, BloodPressure, Cholesterol, FamilyHistory, are independent variables and Disease is dependent variables.

import numpy as np

import pandas as pd

from sklearn.model_selection import train_test_split

from sklearn.preprocessing import StandardScaler

from sklearn.neighbors import KNeighborsClassifier

from sklearn.metrics import accuracy_score, confusion_matrix, classification_report

# -----------------------------------

# 1. Load data from Excel

# -----------------------------------

#sample data can be exported to

#excel from the URL

Get Disease Classification in Excel

data = pd.read_excel("patient_dosage_response.xlsx")

print("Dataset Preview:")

print(data.head())

df = pd.DataFrame(data)

# ----------------------------------

# 2. Separate Features and Target

# ----------------------------------

X = df[['Age', 'BloodPressure', 'Cholesterol', 'FamilyHistory']]

y = df['Disease']

# ----------------------------------

# 3. Train-Test Split

# ----------------------------------

X_train, X_test, y_train, y_test = train_test_split(

X, y, test_size=0.3, random_state=42

)

scaler = StandardScaler()

X_train_scaled = scaler.fit_transform(X_train)

X_test_scaled = scaler.transform(X_test)

# -----------------------------------

# 4. Train the KNN model

# -----------------------------------

knn_model = KNeighborsClassifier(

n_neighbors=5, # K value

metric='euclidean'

)

knn_model.fit(X_train_scaled, y_train)

y_pred = knn_model.predict(X_test_scaled)

#Evaluate the model

print("Accuracy:", accuracy_score(y_test, y_pred))

print("\nConfusion Matrix:\n", confusion_matrix(y_test, y_pred))

print("\nClassification Report:\n", classification_report(y_test, y_pred))

#Predict with New Sample Data (NumPy Array)

# New patient data

# Format: [Age, BloodPressure, Cholesterol, FamilyHistory]

new_patients = np.array([

[45, 150, 230, 1], # High risk

[28, 118, 175, 0] # Low risk

])

predictions = knn_model.predict(new_patients)

print("Disease Predictions:")

print(predictions)

About Us | Contact Us | Sitemap | Privacy Policy